As part of Sean Andersson’s Robotics Lab at Boston University, a friend and I spent a summer researching ultrasonic audio signals and their role in compressive sensing for robotic applications.

Compressive sensing is a signal processing technique that allows signals to be reconstructed from far fewer samples than conventional sampling would require, provided the signal is sparse in some representation. In our case, we used under-sampled ultrasonic echoes to describe, or ‘hear’, physical spaces so that robots can ultimately build maps to navigate new environments. Constructing or updating a map of an unknown environment while simultaneously tracking one’s own location within it is known as simultaneous localization and mapping (SLAM).
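To make the idea of recovering a sparse signal from few measurements concrete, here is a minimal sketch of one standard recovery method, orthogonal matching pursuit (OMP). It is a generic textbook stand-in, not the reconstruction algorithm from our project; the random Gaussian sensing matrix, the problem sizes and the random seed are all illustrative assumptions.

```python
import numpy as np


def omp(A: np.ndarray, y: np.ndarray, k: int) -> np.ndarray:
    """Estimate a k-sparse x from measurements y = A @ x (orthogonal matching pursuit)."""
    n = A.shape[1]
    residual = y.copy()
    support: list[int] = []
    coeffs = np.zeros(0)
    for _ in range(k):
        # Greedily pick the column most correlated with the unexplained residual.
        idx = int(np.argmax(np.abs(A.T @ residual)))
        if idx not in support:
            support.append(idx)
        # Re-fit all selected columns jointly by least squares.
        coeffs, *_ = np.linalg.lstsq(A[:, support], y, rcond=None)
        residual = y - A[:, support] @ coeffs
    x_hat = np.zeros(n)
    x_hat[support] = coeffs
    return x_hat


# Illustrative problem: a 256-sample signal with only 4 nonzero entries,
# observed through 96 random linear measurements (fewer than 256).
rng = np.random.default_rng(0)
n, m, k = 256, 96, 4
x = np.zeros(n)
true_support = rng.choice(n, size=k, replace=False)
x[true_support] = rng.uniform(1.0, 2.0, size=k) * rng.choice([-1.0, 1.0], size=k)
A = rng.normal(size=(m, n)) / np.sqrt(m)  # random sensing matrix
y = A @ x                                 # the under-sampled measurements
x_rec = omp(A, y, k)
print("max reconstruction error:", np.max(np.abs(x - x_rec)))
```

The key point is that `y` has only 96 entries while `x` has 256; recovery works here because `x` is sparse, which is the same assumption compressive sensing relies on for echo data.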

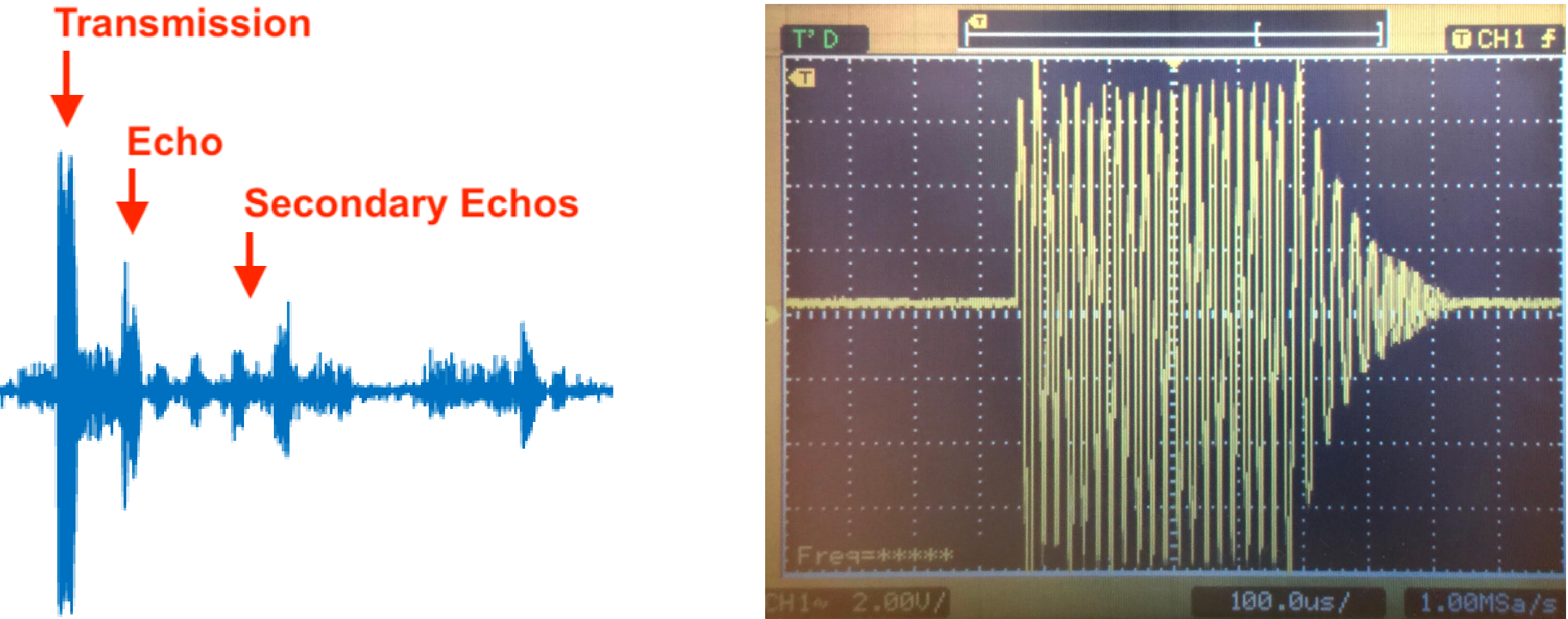

My project focused primarily on signal acquisition: generating ultrasonic pulses and capturing the returning echoes as they ‘bounced’ off various objects in our controlled test environment.

We spent the summer building and testing the equipment used to transmit and capture audio signals, as well as the physical structures that made up our experimental configurations.

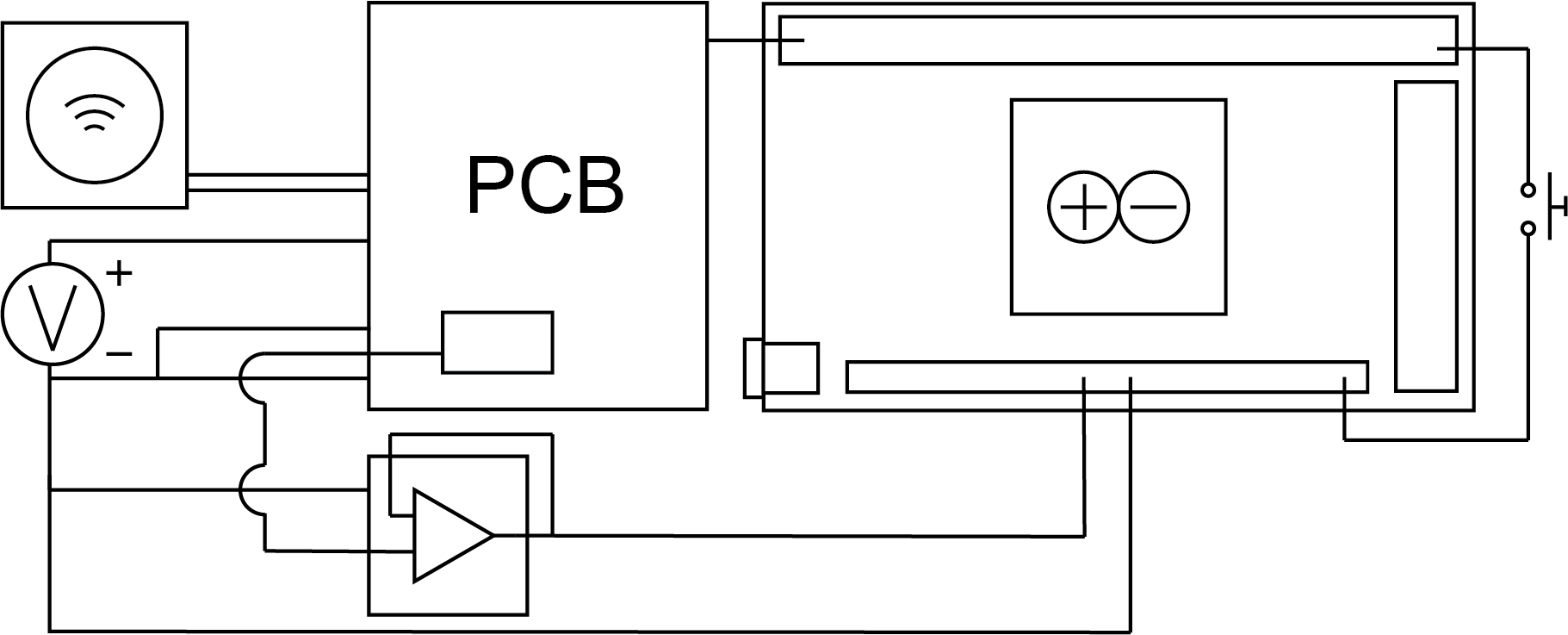

The sending-and-receiving module was built around a sonar transducer and a breakout PCB that we used to trigger ultrasonic pulses. To record the returning echoes, we used an Arduino Due microcontroller to read the analog signal from the breakout board. We chose the Due specifically because we could overclock it to sample at 1 MHz, which was essential for capturing the 50 kHz echo signals, whose Nyquist rate is 100 kHz (see Nyquist rate).
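The sampling arithmetic behind that choice can be checked in a few lines. This is a sketch using the 1 MHz and 50 kHz figures from the text; the speed of sound (343 m/s, dry air at roughly 20 °C) is an assumption added only to express the timing resolution as a distance.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumption: dry air at roughly 20 °C


def nyquist_rate_hz(max_freq_hz: float) -> float:
    """Minimum sampling rate that avoids aliasing for a band-limited signal."""
    return 2.0 * max_freq_hz


def oversampling_factor(sample_rate_hz: float, max_freq_hz: float) -> float:
    """How many times faster than the Nyquist rate we are sampling."""
    return sample_rate_hz / nyquist_rate_hz(max_freq_hz)


def samples_per_cycle(sample_rate_hz: float, signal_freq_hz: float) -> float:
    """Number of samples captured per period of the signal."""
    return sample_rate_hz / signal_freq_hz


def range_per_sample_m(sample_rate_hz: float, c: float = SPEED_OF_SOUND_M_S) -> float:
    """One-way range covered by one sample of round-trip echo delay."""
    return c / (2.0 * sample_rate_hz)


fs = 1_000_000    # Due sampling rate from the text
f_echo = 50_000   # echo frequency from the text
print(nyquist_rate_hz(f_echo))          # 100000.0
print(oversampling_factor(fs, f_echo))  # 10.0
print(samples_per_cycle(fs, f_echo))    # 20.0
print(range_per_sample_m(fs))           # about 0.17 mm per sample
```

Sampling at ten times the Nyquist rate yields about 20 samples per cycle of the 50 kHz carrier, so the echo waveform itself is resolved rather than merely detected.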

At first, we discovered that the incoming echo signals were being clipped above 3.3 V, the maximum analog input voltage of the Due, so we added an op amp to scale down the signal voltage.
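The exact op-amp circuit isn't described here, so the following is only a sketch of the arithmetic involved in scaling a signal into the Due's 0 to 3.3 V input range. The 5 V echo peak, the 90% headroom and the resistive-divider-plus-voltage-follower topology are hypothetical values chosen for illustration.

```python
ADC_MAX_V = 3.3  # Due analog input ceiling quoted in the text


def required_gain(peak_in_v: float, headroom: float = 0.9) -> float:
    """Largest gain that keeps a signal peak below headroom * ADC_MAX_V."""
    return headroom * ADC_MAX_V / peak_in_v


def divider_gain(r_top_ohm: float, r_bottom_ohm: float) -> float:
    """Gain of a resistive divider, e.g. one feeding an op-amp voltage follower."""
    return r_bottom_ohm / (r_top_ohm + r_bottom_ohm)


def clips(peak_in_v: float, gain: float) -> bool:
    """True if the scaled peak would exceed the ADC ceiling."""
    return peak_in_v * gain > ADC_MAX_V


peak = 5.0  # hypothetical echo peak in volts; not a measured value
print("max usable gain:", required_gain(peak))
gain = divider_gain(10_000, 15_000)  # hypothetical 10 kΩ / 15 kΩ divider
print(gain, clips(peak, gain))       # 0.6 False
```

A divider ahead of a follower is one common choice because a non-inverting op-amp stage cannot have a gain below 1. An inverting stage with a smaller feedback resistor also attenuates, but it flips the signal's polarity. If the echo swings below 0 V, the signal would also need a DC offset to stay inside the ADC range.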

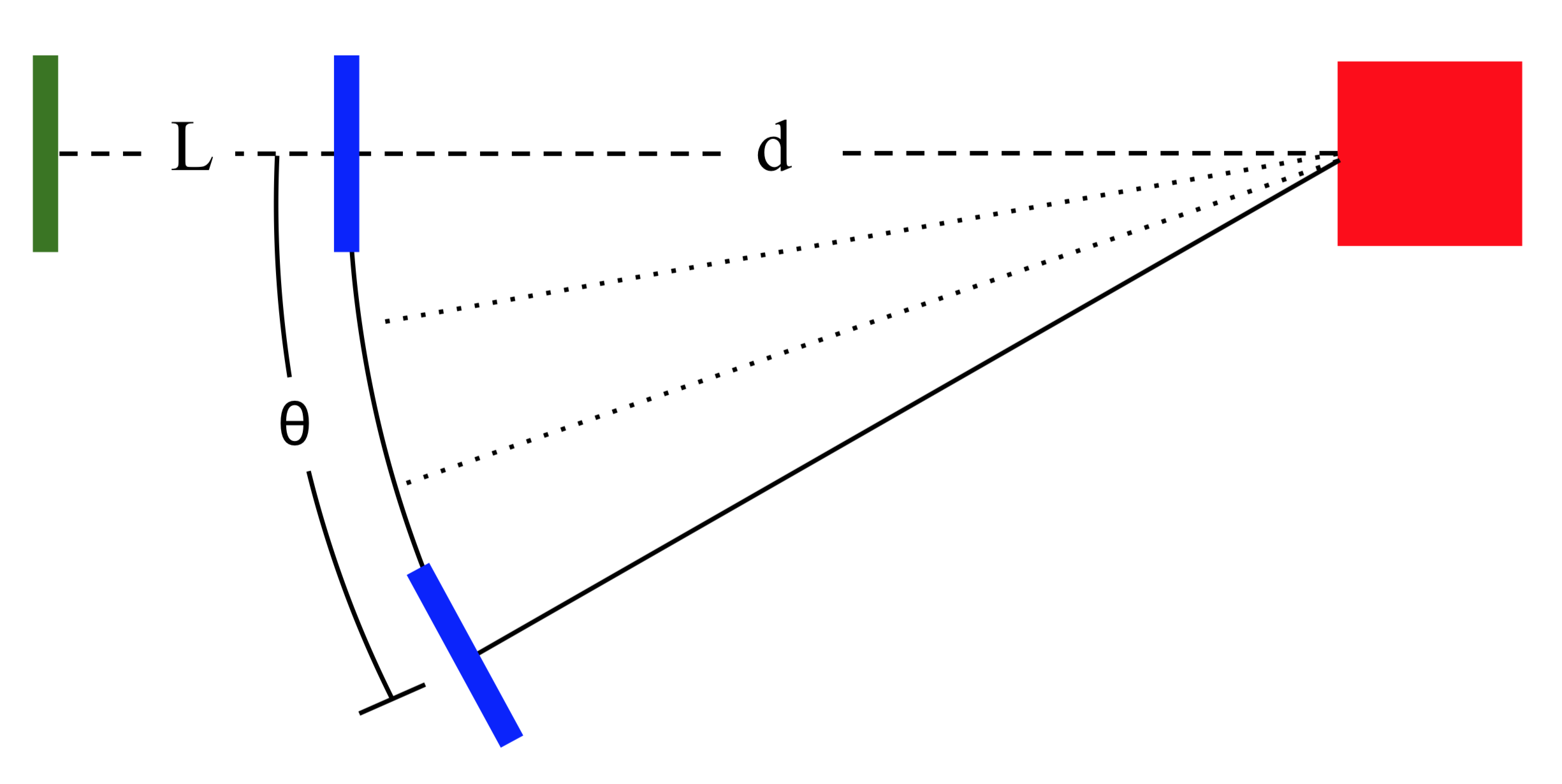

After devising a way to consistently transmit and receive ultrasonic audio signals, I built a number of test targets that we used to record sample data. Each consisted of a 12” square acrylic target mounted 12” above the ground, with acoustic foam placed above and below it so that echoes would return only from the flat target.

Finally, using a second, identical test target, we arranged a number of test configurations and recorded their echoes, varying the distance to each target (L, d) as well as the angular position of the first target. Each distinct configuration served as an input for further refining the reconstruction algorithm.
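Target distance and echo delay are linked by the round-trip time of flight, d = c·t / 2. The sketch below estimates range from a recorded trace using a simple threshold crossing. It is an illustration, not our processing pipeline: the speed of sound, the threshold, the `blanking` window (meant to skip transducer ring-down) and the synthetic trace are all assumptions.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumption: dry air at roughly 20 °C
SAMPLE_RATE_HZ = 1_000_000  # Due sampling rate quoted in the text
ECHO_FREQ_HZ = 50_000       # echo frequency quoted in the text


def first_echo_index(samples, threshold, blanking=0):
    """Index of the first sample whose magnitude reaches threshold,
    ignoring the first `blanking` samples (hypothetical ring-down window)."""
    for i in range(blanking, len(samples)):
        if abs(samples[i]) >= threshold:
            return i
    return None


def index_to_distance_m(index, fs=SAMPLE_RATE_HZ, c=SPEED_OF_SOUND_M_S):
    """Convert a round-trip delay in samples into a one-way range in metres."""
    return c * (index / fs) / 2.0


def synthetic_echo(distance_m, length, cycles=10, fs=SAMPLE_RATE_HZ, c=SPEED_OF_SOUND_M_S):
    """Silent trace containing a short 50 kHz burst delayed by the round trip."""
    trace = [0.0] * length
    start = round(2.0 * distance_m / c * fs)
    for n in range(cycles * fs // ECHO_FREQ_HZ):
        if start + n < length:
            trace[start + n] = math.sin(2 * math.pi * ECHO_FREQ_HZ * n / fs)
    return trace


trace = synthetic_echo(1.0, length=8000)  # hypothetical target 1 m away
idx = first_echo_index(trace, threshold=0.5, blanking=500)
print("estimated range (m):", index_to_distance_m(idx))
```

A threshold detector fires a couple of samples after the burst actually begins, so it slightly overestimates range. Cross-correlating the trace with the transmitted pulse (a matched filter) is the usual way to get a more precise delay estimate.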

After gathering and parsing our data, my teammates and I wrote a paper that we submitted to the IFAC Intelligent Autonomous Vehicles Symposium, held in the summer of 2019 in Gdansk, Poland.